The crucible of conflict in Ukraine is teaching many things to those willing to look, listen and learn. Almost at the pinnacle of the hierarchy of lessons learned lie multiple aspects of drone warfare – fast becoming a warfare domain in its own right.

Sensors, propulsion, data processing and dissemination, effectors and payloads – all exhibit persistent tendencies towards innovative solutions for perennial battlespace challenges. Cutting through the marketing hype and misleading propaganda that surround the two-year-old conflict, however, is not that simple, especially when it comes to the question of platform autonomy.

The confusion is not helped, of course, by the fact that the word ‘autonomy’ means different things to different stakeholders. For those whose first language is not English, the word can denote a platform’s available range of action or endurance, which in an unmanned aerial vehicle (UAV) context means how far and how long it can fly; for others it indicates a degree of organisational or regional political independence. Others still see it as indicating a capacity for independent thought, reasoning or action. For UAVs, both the endurance and the independent-action interpretations are relevant and, though distinct, are related to a degree.

One of the principal motivations for the development of early UAVs lay in the ability to project effect at a distance. That effect could be to prosecute an intelligence, surveillance and reconnaissance (ISR) mission – to determine “what is on the other side of the hill” and provide tactical-level commanders with data and support for improved decision-making – or it could be to deliver kinetic effect on a target. Effective range, flight endurance and the security of control and communication signals therefore became paramount. Interpret manufacturer data, read specifications and requirements couched in procurementese, separate the wheat from the chaff in international reporting of ongoing events, and it becomes clear that the word autonomy, when applied to UAVs, can often be read as range, endurance or loiter time. “An autonomy of x hours” is a frequently seen capability label.

Credit: Anduril Industries

That is not unimportant. Combat operations in Ukraine are showing an imperative need to deliver effects at range – and to reduce the time taken to bring effects to bear, which in turn is influencing platform development. One of the key concepts underlying the development of the Anduril Industries Roadrunner UAV, for example, is exploitation of a high ‘dash speed’. Its high subsonic speed considerably enhances the system’s tactical utility, enabling an effect to be delivered to a point of need significantly faster than is normal in ‘traditional’ operations. That enhances the autonomy of operations in the first sense of the word.

However, it is the other definition that is far more prevalent when discussing the unmanned system domain. The capability to prosecute a mission – and, where possible, adjust the mission parameters in line with evolving circumstances – in an independent, or at least semi-independent manner, with little or no human intervention represents the Holy Grail for the unmanned systems community. This is the goal to which vast resources and intellectual capital are being directed with varying results and a host of longer-term issues arising that will require resolution sooner rather than later.

The issues fall into two broad categories – operational and political. The operational issues, while thorny and complex, are more easily dealt with in good military fashion, by breaking a complex issue into its component parts and resolving each sub-issue in sequence. The political issues require a more subtle and cohesive approach.

Autonomous operations

In most cases, the operational issues surrounding autonomous capability revolve around controlling independence of action. That may sound counterintuitive, but take, for example, the case of a combat reconnaissance unit commander with a number of UAVs at his disposal and a mission to determine hostile dispositions and intent at a range of, say, 30 km. Some of his platforms are equipped for ISR, while others carry weapon systems. Some of his concerns will revolve around whether his ISR assets are reliable enough to pass targeting information directly to the weaponised aircraft, effectively cutting him out of the decision loop.

Credit: DefendTex

The concept of the Observe, Orient, Decide, Act (OODA) loop was developed with combat operations in mind, though it is increasingly applied to non-military organisational constructs. It was developed, however, with Homo sapiens in mind as the thinking machine that would conduct all four of those activities. Libya, Nagorno-Karabakh, Syria, Gaza and Ukraine have all, to a greater or lesser degree, begun to change militaries’ approach to the concept. Intelligent machines are capable of conducting all four constituent activities in certain contexts and – subject to carefully developed guidelines and algorithms – of doing so in a safe, reliable and repeatable manner.
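The choice facing the reconnaissance commander described earlier can be made concrete with a small sketch. The Python below is a hypothetical illustration, not any manufacturer’s architecture: it runs one Observe, Orient, Decide, Act cycle over made-up ISR reports, and a single flag, `human_in_loop`, determines whether an operator must approve each candidate before a cue is passed to another platform. The names `Track` and `ooda_cycle`, the report format and the 0.9 confidence threshold are all assumptions chosen for illustration.

```python
from collections.abc import Callable
from dataclasses import dataclass


@dataclass(frozen=True)
class Track:
    """A contact reported by an ISR platform (hypothetical structure)."""
    track_id: str
    label: str         # e.g. "hostile", "civilian", "unknown"
    confidence: float  # classifier confidence, 0.0 to 1.0


def observe(reports: list[dict]) -> list[Track]:
    """Observe: turn raw (assumed) ISR reports into tracks."""
    return [Track(r["id"], r["label"], r["confidence"]) for r in reports]


def orient(tracks: list[Track], min_confidence: float) -> list[Track]:
    """Orient: keep only tracks classified hostile with enough confidence."""
    return [t for t in tracks if t.label == "hostile" and t.confidence >= min_confidence]


def decide(candidates: list[Track], human_in_loop: bool,
           operator_approves: Callable[[Track], bool]) -> list[Track]:
    """Decide: with a human in the loop, each candidate needs explicit approval;
    without one, everything that survived Orient is passed straight on."""
    if human_in_loop:
        return [t for t in candidates if operator_approves(t)]
    return list(candidates)


def act(approved: list[Track]) -> list[str]:
    """Act: here we only emit a cue message for another platform."""
    return [f"CUE {t.track_id}" for t in approved]


def ooda_cycle(reports: list[dict], human_in_loop: bool,
               operator_approves: Callable[[Track], bool],
               min_confidence: float = 0.9) -> list[str]:
    """Run one full Observe-Orient-Decide-Act cycle."""
    tracks = observe(reports)
    candidates = orient(tracks, min_confidence)
    approved = decide(candidates, human_in_loop, operator_approves)
    return act(approved)


if __name__ == "__main__":
    reports = [
        {"id": "T1", "label": "hostile", "confidence": 0.95},
        {"id": "T2", "label": "hostile", "confidence": 0.60},
        {"id": "T3", "label": "civilian", "confidence": 0.99},
    ]

    def cautious(track: Track) -> bool:
        # Hypothetical operator who withholds approval after checking imagery.
        return False

    print(ooda_cycle(reports, human_in_loop=True, operator_approves=cautious))   # []
    print(ooda_cycle(reports, human_in_loop=False, operator_approves=cautious))  # ['CUE T1']
```

With the flag set, a cautious operator can withhold every cue; with it cleared, the machine forwards whatever its own classifier rates highly enough. That one switch is, in miniature, the question of who sits in the decision loop.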

The implications of autonomous action in aerial platforms for military and security applications are enormous. Effect at range hugely expands the areas of interest that can be monitored and/or protected, whether that area is the immediate environs of an airport or, for example, the whole of the Andaman Sea. The ability of a high-altitude UAV monitoring an area over weeks or months to detect changes in ‘patterns of life’ and generate appropriate alerts is valuable. Some would argue that its ability to decide what to do about the changes observed would be equally valuable – and that, by saving critical time and avoiding the uncertainty of human decision-making, it could save lives. Others believe that is a bridge too far.
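The monitoring half of that argument is the less contentious one, and a minimal sketch shows how it might work. Assuming daily counts of some observed activity (vehicles, vessels or people in an area) are available, the hypothetical function below compares each day with a rolling baseline of the preceding days and raises an alert when activity departs from it by more than a set number of standard deviations. The function name, the 14-day baseline, the threshold of 3.0 and the sample data are illustrative assumptions; real pattern-of-life analysis is far richer. The function only reports: deciding what to do about an alert is left to a human.

```python
import statistics
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    day: int        # index of the day that triggered the alert
    count: int      # activity observed on that day
    z_score: float  # how many standard deviations from the baseline


def pattern_of_life_alerts(daily_counts: list[int], baseline_days: int = 14,
                           threshold: float = 3.0) -> list[Alert]:
    """Flag days whose activity departs sharply from a rolling baseline.

    Each day is compared with the mean and population standard deviation
    of the preceding `baseline_days` days; |z| > threshold raises an alert.
    """
    alerts = []
    for day in range(baseline_days, len(daily_counts)):
        window = daily_counts[day - baseline_days:day]
        mean = statistics.mean(window)
        stdev = statistics.pstdev(window)
        if stdev == 0:
            stdev = 1.0  # avoid division by zero on a perfectly flat baseline
        z = (daily_counts[day] - mean) / stdev
        if abs(z) > threshold:
            alerts.append(Alert(day, daily_counts[day], round(z, 2)))
    return alerts


if __name__ == "__main__":
    # Made-up data: two weeks of routine activity, then a sudden surge on day 14.
    counts = [10, 12, 11, 9, 10, 11, 12, 10, 9, 11, 10, 12, 11, 10, 40, 10]
    for alert in pattern_of_life_alerts(counts):  # one alert, for day 14
        print(alert)
```

A rolling window lets the baseline drift with gradual, legitimate change while still catching abrupt departures from it.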

Scrutiny, suspicion and safety

The era in which UAVs have developed and become so prominent has coincided with a time when the general public has been granted unprecedented access to information and communications capabilities, with the inevitable consequence that debates have become far more frequent and encompass far more divergent points of view than was previously possible. One consequence is increased public scrutiny of action (or inaction) by ‘the authorities’, and nowhere, arguably, is this more evident than in politics, with international relations and the use of military power very high on the scrutineers’ agenda. Those who subscribe to conspiracy theories see malicious intent at the heart of every action, and many have seized on fears that intelligent systems might overrule human intent and cause mayhem on a global scale.

Although such fears owe something to science fiction, there remains a kernel of reality at their heart. But what most ill-informed commentators miss is the fact that autonomy has not emerged, butterfly-like, from a recently woven cocoon: it has been developed and considered, experimented with and modified, for decades. In all unmanned system domains, shrewd manufacturers and concerned authorities have debated, discussed and collaborated in the development of operational architectures that meet the rigid constraints of operational safety and enduring human control. The fact that we are only now seeing the emergence of routine UAV operations in airspace populated by manned aircraft is testament to the 20-plus years it has taken to consider and frame the regulatory environment in which such operations must take place.

Credit: HAJIM School of Engineering & Applied Sciences, University of Rochester

On the battlefield, such considerations are secondary. The imperatives are different. Yet keeping a ‘human-in-the-loop’ capability, with ultimate authority over the use of deadly force, remains a powerful component of the argument for allowing unmanned systems to operate relatively independently. Rheinmetall’s announcements of developments in unmanned systems on the ground and in the air are peppered with assurances of the primacy of human authority in all circumstances. AeroVironment emphasises the positive control of ISR drones and unmanned combat aerial vehicles (UCAVs). Developers of software and analysis algorithms focus potential customers’ attention on the multiple safety precautions built into every new release. Concerns, however, persist.

Where is autonomy going?

Questions remain as to the direction autonomy is headed – how will we reconcile the multiple requirements and make efficient, effective use of the wide range of capabilities we have unleashed through unmanned systems? How do we ensure the primacy of human initiative and limit the inexorable march of robotic systems?

In the long term, there would seem to be few acceptable answers, since the complexities of the surrounding issues are explained to very few and adequately understood by even fewer. There have been dangers inherent in every development in human history, and we should not fool ourselves that the development of machine autonomy is free from all potential for disaster. In reality, however, we already live with the benefits of machines conducting everyday activities with varying degrees of autonomy. Driverless trains have become a familiar sight in London’s Docklands and many other cities. On a typical passenger-carrying flight, the crew spends an astonishingly high proportion of the time monitoring automated systems, flying by hand for mere minutes at each end of the flight segment. They are, of course, still poised to intervene immediately in the event of a systems failure. Telesurgery is already saving lives in circumstances in which medical intervention might otherwise be impossible. Defence and security, though, are different.

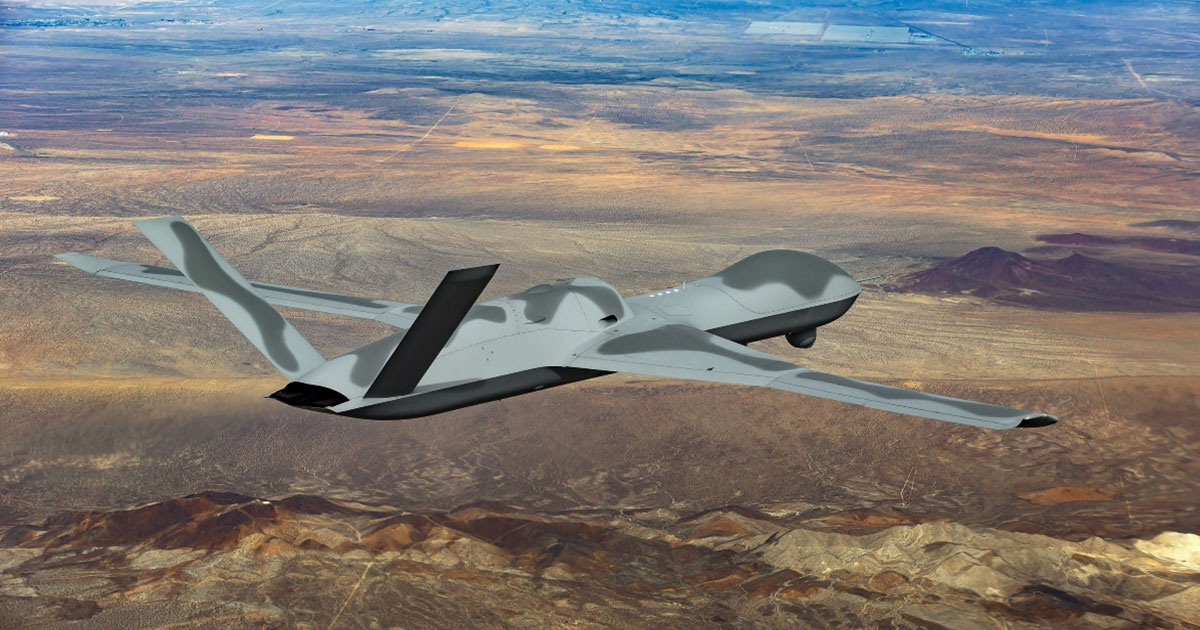

Credit: GA-ASI

One aspect of the conflict in Ukraine that concerns some observers is the extent to which the desire for instant gratification overrides the need for caution in capability development. Ukrainian authorities and frontline troops have been hugely innovative in jury-rigging, adapting and cajoling existing systems into doing things they were not originally designed to do; examples include civilian UAVs repurposed to drop grenades. Yet little attention has been paid to the potential for disaster which, despite dire predictions, does not yet appear to have materialised. Absolute safety has been sacrificed on the altar of immediate effect – a trait common throughout the history of warfare. Popular concerns centre on the spectre of large-scale ‘collateral damage’ resulting from decisions taken at a literally inhuman level.

There are, however, powerful minds at work in charting the future course of autonomy. Sensor and computing behemoths such as Hensoldt and Microsoft are working on development and integration of capabilities that will make the routine operation of ever more capable robotic systems possible. Companies large and small – from Lockheed Martin and BAE Systems to Milrem Robotics and Anduril Industries – are developing powerful capabilities that will make swarming attacks, and defence against them, viable considerations for aerial combat in the immediate future.

Credit: US Army

The way forward, at least for now, may well be a continued evolution of what we have seen in combat aircraft avionics over the last four decades or so. Early advanced combat cockpits presented sensor output for human interpretation, decision and action; the glass cockpit improved the way machine-derived data was presented to aircrew; the F-35 cockpit, for example, takes this a stage further, using sensor fusion, in which onboard sensors and computing power collect, analyse and parse data, to offer the pilot a series of choices. All of this saves time and eases the pilot’s cognitive load, shifting the pilot’s focus from analysis to decision-making. Whether that adequately satisfies the human need to feel in control remains to be seen.

The bottom line is that this genie is too useful (and too independent) to be put back into the bottle. Autonomy in unmanned systems is here to stay and, indeed, is pivotal to the success so widely hoped for from them in the short and medium term.

Tim Mahon